Title: Love or Hate? Share or Split? Privacy-Preserving Training Using Split Learning and Homomorphic Encryption

Authors: Tanveer Khan, Khoa Nguyen, Antonis Michalas and Alexandros Bakas

Research Artifact: https://github.com/khoaguin/HESplitNet

Venue: Proceedings of the 20th Annual International Conference on Privacy, Security & Trust (PST’23), Copenhagen, Denmark, August 21–23, 2023.

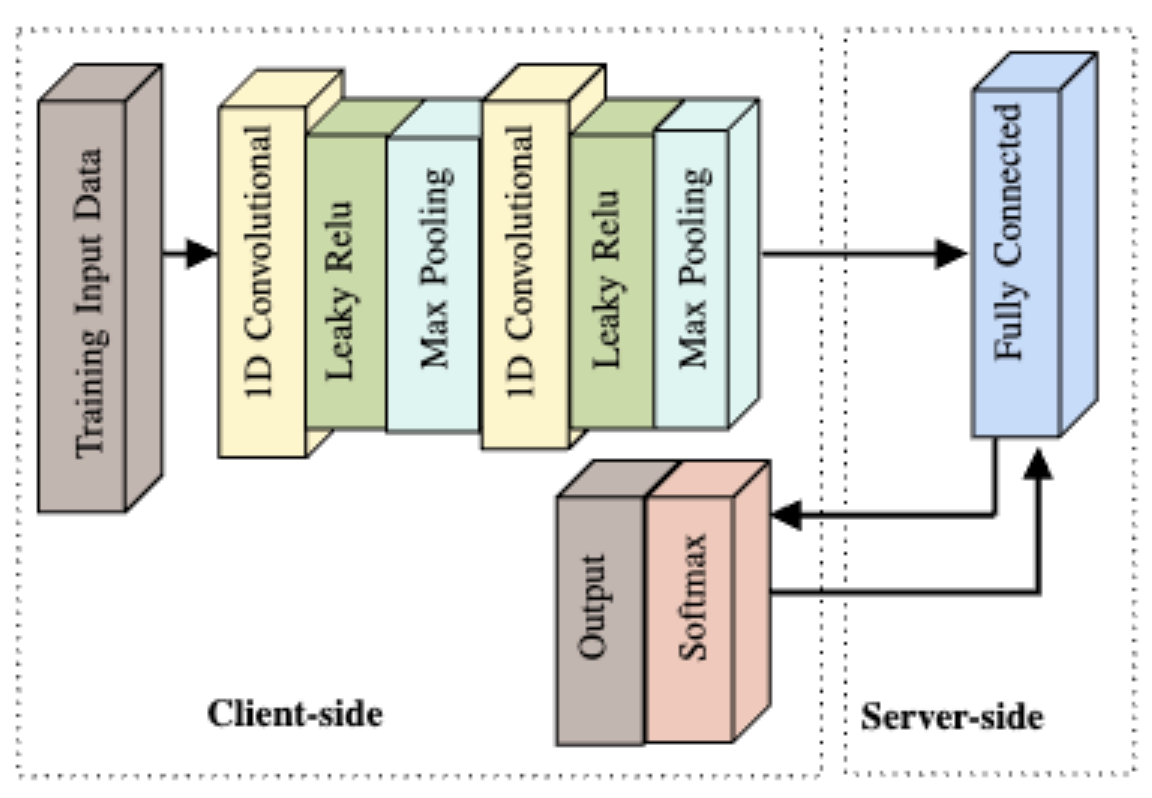

Abstract: Split learning (SL) is a new collaborative learning technique that allows participants, e.g. a client and a server, to train machine learning models without the client sharing raw data. In this setting, the client first applies its part of the machine learning model to the raw data to generate activation maps and then sends them to the server, which continues the training process. Previous works in the field demonstrated that the activation maps can be used to reconstruct the client's data, resulting in privacy leakage. Moreover, existing techniques that mitigate this leakage perform significantly worse in terms of accuracy. In this paper, we improve upon previous works by constructing a protocol based on U-shaped SL that can operate on homomorphically encrypted data. More precisely, in our approach, the client applies homomorphic encryption (HE) to the activation maps before sending them to the server, thus protecting user privacy. This is an important improvement that reduces privacy leakage in comparison to other SL-based works. Finally, our results show that, with the optimal set of parameters, training on homomorphically encrypted data in the U-shaped SL setting reduces accuracy by only 2.65% compared to training on plaintext, while the privacy of the raw training data is preserved.
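To make the data flow concrete, here is a minimal, hypothetical sketch of one forward pass through a U-shaped split with encrypted activation maps. It is not the authors' implementation (the HESplitNet repository linked above is the reference for that). It swaps in a toy Paillier scheme, which is only additively homomorphic, with deliberately tiny primes that offer no security. It also assumes a fixed client-side layer, a single server-side linear layer, and fixed-point encoding with a scale of 100; all function names are illustrative. The server only ever handles ciphertexts, and the client decrypts the server's output, so the remaining layers and the labels stay on the client.

```python
import math
import secrets

# Toy Paillier keypair (g = N + 1). WARNING: these primes are far too small
# to be secure; they only keep the sketch fast and dependency-free.
P, Q = 1_000_003, 1_000_033
N = P * Q
N2 = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(P-1, Q-1)
MU = pow(LAM, -1, N)                               # modular inverse (Python 3.8+)

SCALE = 100  # hypothetical fixed-point scale for activations and weights


def encrypt(m: int) -> int:
    """Paillier encryption: c = (1 + m*N) * r^N mod N^2 with random r in Z_N^*."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (1 + (m % N) * N) * pow(r, N, N2) % N2


def decrypt(c: int) -> int:
    """Recover m mod N, mapping residues above N/2 back to negative integers."""
    m = (pow(c, LAM, N2) - 1) // N * MU % N
    return m - N if m > N // 2 else m


# --- Client: first layers of the U-shaped model (hypothetical ReLU layer) ---
def client_head(x):
    return [max(0.0, 0.5 * v - 0.1) for v in x]


def client_encrypt(activations):
    """Fixed-point encode each activation, then encrypt it before sending."""
    return [encrypt(round(a * SCALE)) for a in activations]


# --- Server: a linear layer evaluated on ciphertexts only ---
def server_linear(enc_acts, weights):
    """Compute Enc(sum_i w_i * a_i) per output unit as prod_i c_i^{w_i} mod N^2."""
    out = []
    for row in weights:
        acc = 1  # 1 is a trivial encryption of 0
        for c, w in zip(enc_acts, row):
            # Raising to (w mod N) multiplies the plaintext by w, also for w < 0.
            acc = acc * pow(c, round(w * SCALE) % N, N2) % N2
        out.append(acc)
    return out


# --- Client: decrypt the server's output and undo both fixed-point scales ---
def client_tail(enc_out):
    # A real model would apply its last layers and the loss to these values here.
    return [decrypt(c) / (SCALE * SCALE) for c in enc_out]


if __name__ == "__main__":
    acts = client_head([1.0, 2.0, 3.0])
    weights = [[1.0, -0.5, 0.25], [0.2, 0.3, -1.0]]
    print(client_tail(server_linear(client_encrypt(acts), weights)))
```

Because this toy scheme supports only ciphertext addition and multiplication by plaintext constants, the server can evaluate linear layers but not nonlinear activations, which is one reason the nonlinear parts stay on the client in this sketch. The backward pass is omitted.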