A haptics-enabled virtual reality (VR) interface for medical analysis, built on a VR headset and a force-feedback device. It gives the user flexible, natural viewing angles and a realistic sense of touch, and implements medical functions including object manipulation (rotate, pan, zoom), drawing, measurement, cutting, and drilling.

Thermal Haptics through XR Technology

This work presents an experiential showcase demonstrating how dynamic thermal rendering based on conductivity enables users to distinguish materials beyond simply detecting hot or cold objects. By incorporating a practical conductivity model with thermal decay, the system generates richer and more realistic thermal effects over time, highlighting perceptual differences between objects that share the same temperature. The project also showcases the integration of WEART thermal gloves within a VR environment to deliver nuanced haptic feedback. Overall, the interactive demonstration illustrates the potential of conductivity-based thermal rendering to enhance realism, material perception, and information transfer in haptic VR experiences.
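To illustrate why two objects at the same temperature can feel different, here is a minimal sketch based on the textbook contact-temperature model for two semi-infinite bodies. It is not the model used in the showcase, which is not described here. The material values, the skin effusivity, the exponential ramp, and the time constant `tau` are all rough assumptions chosen for illustration.

```python
import math


def effusivity(k, rho, c):
    """Thermal effusivity e = sqrt(k * rho * c), in W*s^0.5/(m^2*K)."""
    return math.sqrt(k * rho * c)


def contact_temperature(t_skin, t_obj, e_skin, e_obj):
    """Interface temperature when two semi-infinite bodies touch."""
    return (e_skin * t_skin + e_obj * t_obj) / (e_skin + e_obj)


def rendered_temperature(t, t_skin, t_contact, tau=2.0):
    """Assumed exponential ramp from skin temperature toward the contact
    temperature. tau (seconds) is a hypothetical tuning parameter."""
    return t_contact + (t_skin - t_contact) * math.exp(-t / tau)


if __name__ == "__main__":
    T_SKIN, T_OBJ = 33.0, 20.0   # degrees Celsius, illustrative
    E_SKIN = 1200.0              # rough assumed value for skin
    materials = {
        # name: (conductivity W/(m*K), density kg/m^3, specific heat J/(kg*K))
        "aluminium": (237.0, 2700.0, 900.0),   # approximate values
        "wood": (0.15, 600.0, 1700.0),         # approximate values
    }
    for name, (k, rho, c) in materials.items():
        t_c = contact_temperature(T_SKIN, T_OBJ, E_SKIN, effusivity(k, rho, c))
        samples = [round(rendered_temperature(t, T_SKIN, t_c), 2) for t in (0, 1, 5)]
        print(name, round(t_c, 2), samples)
```

Under these assumptions, the aluminium object pulls the interface temperature much closer to 20 °C than the wooden one does, even though both objects are at the same temperature. That is the kind of material cue a conductivity-based renderer can exploit.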

SocialCompass - Guiding self-regulation in high-arousal moments with ubiquitous sensing

Current research on emotion sensing is limited by its reliance on single data modalities, controlled lab settings, and emotion classification rather than actionable support. To address these gaps, SocialCompass uses a zero-shot inference approach that leverages ImageBind’s cross-modal capabilities to compare live sensor embeddings with conceptual text anchors representing emotional arousal. The system is designed for individuals with social anxiety or autism, professionals in high-stress communication settings, and anyone seeking greater emotional awareness. It aims to build social confidence through discreet real-time feedback, support composure during stressful interactions, and foster deeper self-awareness of emotional and physiological patterns.
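The comparison of a sensor embedding against text anchors can be sketched as cosine similarity followed by a softmax. This is only an illustration of the general zero-shot pattern, not the project's implementation: the embeddings below are toy three-dimensional vectors rather than real ImageBind outputs, and the anchor labels, function names, and `temperature` value are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def softmax(scores, temperature=0.1):
    """Numerically stable softmax; lower temperature sharpens the result."""
    top = max(scores)
    exps = [math.exp((s - top) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def zero_shot_scores(sensor_embedding, anchor_embeddings, temperature=0.1):
    """Return a probability per anchor label for one sensor embedding."""
    labels = list(anchor_embeddings)
    sims = [cosine(sensor_embedding, anchor_embeddings[label]) for label in labels]
    return dict(zip(labels, softmax(sims, temperature)))


if __name__ == "__main__":
    # Toy stand-ins for embeddings that a shared embedding model would produce.
    anchors = {
        "calm": [0.0, 1.0, 0.0],
        "high arousal": [1.0, 0.0, 0.0],
    }
    live_sample = [0.9, 0.1, 0.0]
    print(zero_shot_scores(live_sample, anchors))
```

Because the anchors are plain text concepts embedded in the same space as the sensor data, new states can be added by writing a new anchor phrase rather than by collecting labelled training data.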

Haptics and XR for medical 3D imaging

Multimodal Handwriting Learning Application

Using the “stick-slip” phenomenon, the demonstration illustrates how tangible objects can be moved and controlled on a touchscreen surface without any mechanical linkage. The demonstration device uses a generic Microsoft Surface tablet and stylus to teach participants handwriting tasks and to test how accurately they replicate various shapes and characters.

Virtual and Physical Ice-Hockey Interface

This system uses the “stick-slip” phenomenon to merge physical and virtual interaction into the same space in real time. The demonstration uses a physical representation of an ice hockey goalkeeper that can interact with a virtual playground environment. The system generates a virtual puck, which the physical goalkeeper tries to block in real time. The demo shows how physical forces can be applied to a virtual object and how a virtual object can seamlessly affect a physical entity.

3D Momentary Display

A temporary display created using a vaporized liquid suspension containing microscopic reflective particles that can be ionized when brought into contact with a specific spectrum of light. Using lasers of various frequencies outside the visible spectrum, it is possible to project a 3D object, simplified to vertices and edges, that interacts with the vaporized liquid to display rudimentary shapes.

(WIP) Multimodal In-Vehicle Infotainment System Interaction (HapticAuto Project)

This set of demos explores novel interaction experiences with in-vehicle systems for current and future autonomous vehicles. The demos include:

- Light Operated Vibrotactile Screen

- 4CAPS: Quad Channel Air Pulse Generator

- Pneumatic Sub-woofer based tactile touchscreen Interaction

- Linear Tactile Screen Guard (Vertical Force Actuation on Ridged Glass Displays)

- Longitudinal Screen Excited with Tangential Actuation

- Fukoku Haptic Seat with rotation haptic cue

- Haptic Seat + with vertical actuation

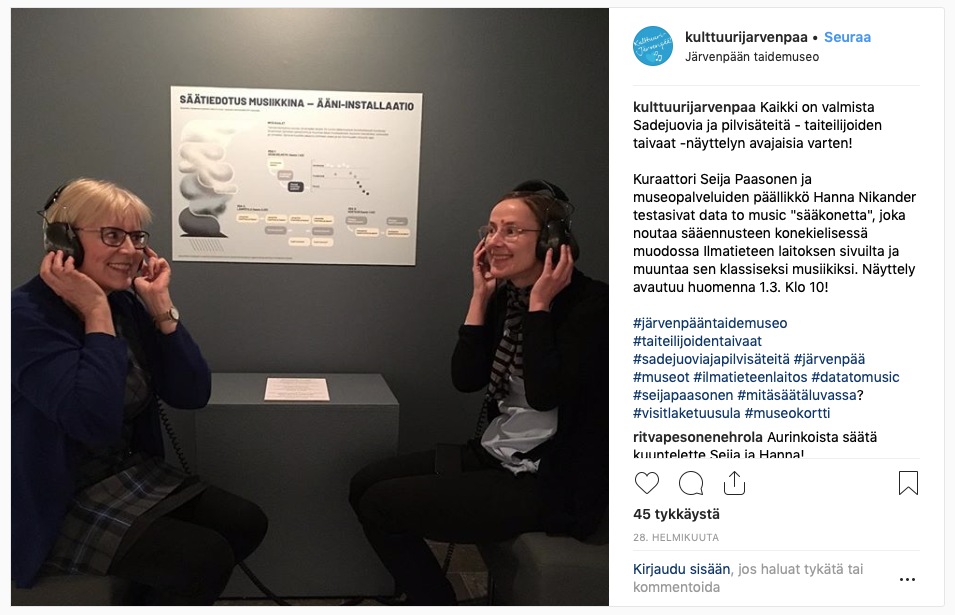

Weather sonification installation

We built a sonification demonstration at the Järvenpää Art Museum. It plays the latest weather forecast in the form of a five-minute piece of classical music. This demo was part of the museum's weather-themed art installation, displayed from March to September 2019.

In this installation, our software periodically fetches the latest 24-hour weather forecast for the Järvenpää area from the web service of the Finnish Meteorological Institute (FMI). It then analyses the weather data, transforms it into MIDI data, and turns that MIDI data into a piece of classical music rendered by a synthetic orchestra.
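The data-to-MIDI step can be pictured with a minimal sketch that maps hourly temperatures onto MIDI note numbers in a C major scale. The installation's actual mapping is not described here, so the scale, the note range, the function names, and the sample temperatures are all assumptions, and fetching the forecast from FMI is left out entirely.

```python
# Semitone offsets of the C major scale within one octave.
C_MAJOR = [0, 2, 4, 5, 7, 9, 11]


def value_to_midi_note(value, lo, hi, base_note=48, octaves=3):
    """Map value in [lo, hi] to a C major note starting at base_note.

    base_note=48 is a C, so the result spans `octaves` octaves above it.
    """
    if hi == lo:
        return base_note
    frac = min(max((value - lo) / (hi - lo), 0.0), 1.0)
    steps = len(C_MAJOR) * octaves
    index = round(frac * steps)
    octave, degree = divmod(index, len(C_MAJOR))
    return base_note + 12 * octave + C_MAJOR[degree]


def forecast_to_notes(temperatures):
    """Turn a list of temperatures into one MIDI note number per value."""
    lo, hi = min(temperatures), max(temperatures)
    return [value_to_midi_note(t, lo, hi) for t in temperatures]


if __name__ == "__main__":
    # Made-up hourly temperatures in degrees Celsius, for illustration only.
    sample = [-3.0, -2.5, -1.0, 0.5, 2.0, 3.5, 2.0, 0.0]
    print(forecast_to_notes(sample))
```

Snapping values to a scale rather than to raw semitones is one common way to keep sonified data sounding musical. A fuller pipeline would also map other variables, such as wind or precipitation, to rhythm, dynamics, or instrumentation.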

Hear an excerpt of the weather forecast for Järvenpää from February 26, 2019:

Sonification is the branch of science that studies turning data into musical forms. Our Data-to-Music project analysed data from various sources – industrial control, fitness and sports, crane maintenance – and developed ways to turn that data into music. The goal was to see if the human ear can identify patterns in data that otherwise remain opaque to our senses. This could enable new ways to approach human-computer interaction with these systems.