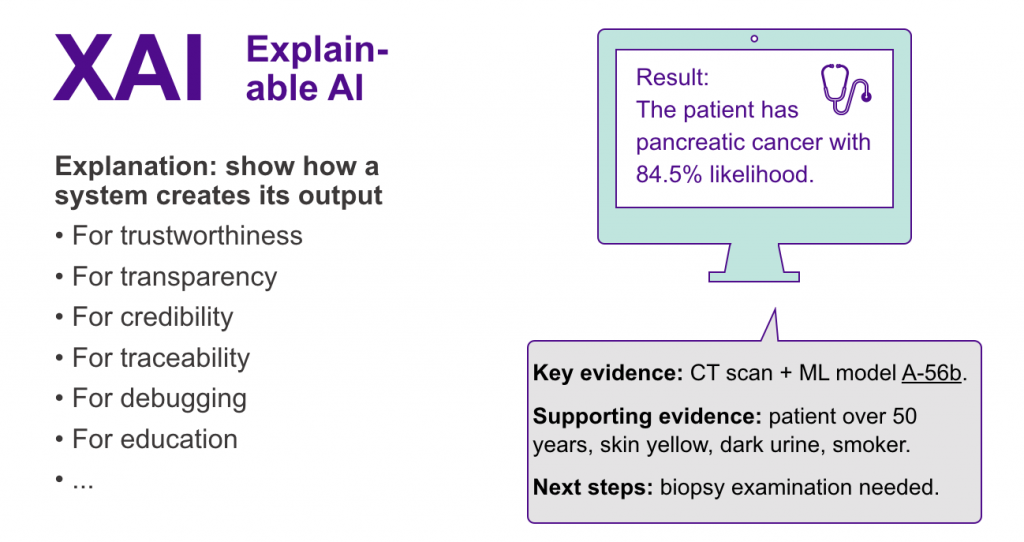

The DPI research project aims to co-create and develop innovative technical solutions for medical and surgical use. The project begins with a co-creation phase, followed by a co-innovation phase in which virtual reality, artificial intelligence and deep learning technologies are applied. The focus is both on creating a new channel for communication and on creating new value for radiology. Learning to interpret three-dimensional body tissues from two-dimensional image slices takes doctors years. To address this, the project produces more realistic, immersive 3D models using augmented reality (AR) and virtual reality (VR) technologies. These technologies provide surgeons and other medical staff with new visual data.

DPI is funded by Business Finland.